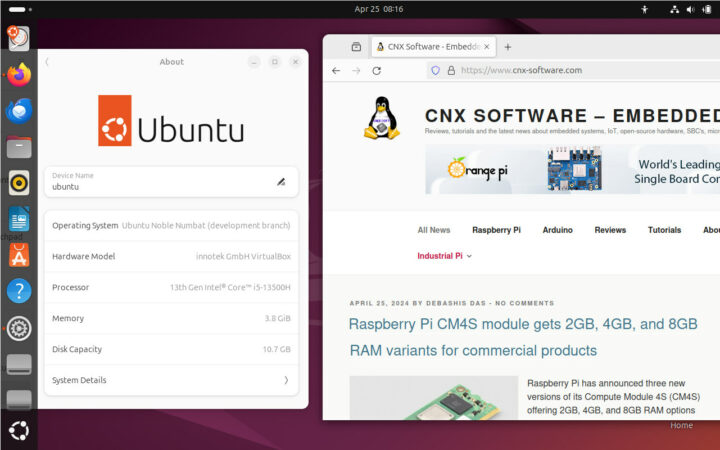

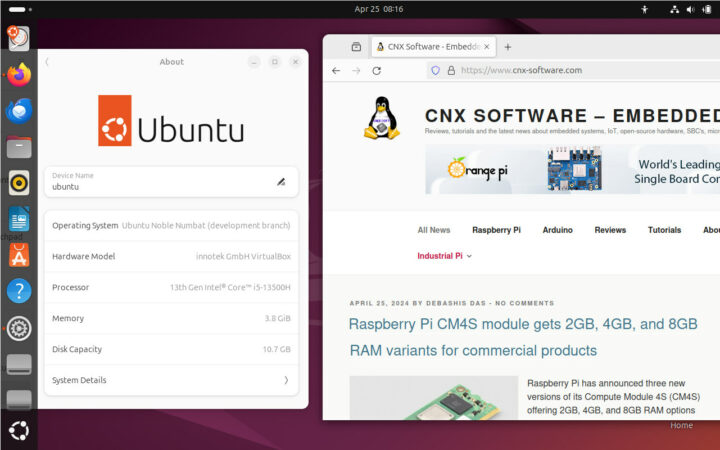

Canonical has just released the Ubuntu 24.04 LTS “Noble Numbat” distribution, a little over two years after Ubuntu 22.04 LTS “Jammy Jellyfish”. The new version of the operating system ships with the recent Linux 6.8 kernel, GNOME 46, and a range of updates and new features we’ll discuss in this post. As a long-term support release, Ubuntu 24.04 LTS gets up to 12 years of security maintenance and support: five years of free security maintenance on the main Ubuntu repository, with Ubuntu Pro extending that commitment to 10 years on both the main and universe repositories (Ubuntu Pro is also free for individuals and small companies with up to 5 devices). Ubuntu Pro subscribers who purchase the Legacy Support add-on can extend this by a further two years, for 12 years in total. Canonical explains the Linux 6.8 kernel brings improved syscall performance, nested KVM support on ppc64el, and access to the [...]

The post Ubuntu 24.04 LTS “Noble Numbat” released with Linux 6.8, up to 12 years of support appeared first on CNX Software - Embedded Systems News.

Via: https://www.cnx-software.com/2024/04/26/ubuntu-24-04-lts-noble-numbat-released-with-linux-6-8-up-to-12-years-of-support/